Navigating System Testing: An Example-Driven Approach

This article examines system testing through a practical, example-based lens. It covers the Test-Driven Development (TDD) cycle, the pursuit of comprehensive test coverage, state transition testing, the iterative enhancement of test cases, and strategies for assessing and improving testing methodologies. The guide is aimed at developers and testers who want to refine their system testing strategies and deliver robust, high-quality software.

Key Takeaways

- Embrace the Red-Green-Refactor cycle of TDD, starting with failing tests to drive development and ensure clean, efficient code.

- Strive for wide test coverage by incorporating unit, integration, functional, and performance tests, utilizing tools to monitor code coverage.

- Apply state transition testing for applications with specific input sequences, using both positive and negative test values to evaluate behavior.

- Iteratively develop and enhance test cases, leveraging error guessing to identify and fix potential issues, thereby refining the test suite.

- Regularly assess the completeness of your test suite and embrace continuous improvement with custom tips and methodologies for better testing.

Understanding the Test-Driven Development Cycle

The Red-Green-Refactor Principle

The Red-Green-Refactor cycle is a foundational concept in Test-Driven Development (TDD). First, a developer writes a test that defines a desired improvement or new function, which initially fails (Red). This failure is expected as the corresponding functionality has not been implemented yet.

Next, the developer writes the minimum amount of code required to pass the test (Green). This step is not about crafting a perfect solution but ensuring that the test passes. For example, returning a hardcoded value might be sufficient at this stage.

Finally, the developer refactors the code to improve its structure and efficiency while keeping the test suite green. This may involve cleaning up code smells, optimizing performance, or improving readability. The cycle then repeats with a new test, gradually building up both the functionality and quality of the code.

Here are the steps in the Red-Green-Refactor cycle:

- Write a failing test (Red)

- Implement the simplest code to pass the test (Green)

- Refactor the code for better quality (Refactor)

- Repeat the cycle with a new test
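The cycle above can be sketched in a few lines of Python. This is only an illustration: the `total_cents` function, its test, and the small `run` helper are hypothetical names invented for this example, and a real project would normally use a test runner such as `unittest` or pytest instead of a hand-written helper.

```python
def run(test):
    """Run a zero-argument test function and report 'red' or 'green'."""
    try:
        test()
    except Exception:
        return "red"
    return "green"


# Stub so the test can be executed before the real code exists.
def total_cents(prices):
    raise NotImplementedError("not written yet")


# Red: the test describes the desired behavior and fails against the stub.
def test_total_cents():
    assert total_cents([250, 100, 50]) == 400


history = [run(test_total_cents)]


# Green: the simplest code that makes the test pass, even if it is clumsy.
def total_cents(prices):
    total = 0
    for index in range(len(prices)):
        total = total + prices[index]
    return total


history.append(run(test_total_cents))


# Refactor: same behavior, cleaner structure; the test must stay green.
def total_cents(prices):
    return sum(prices)


history.append(run(test_total_cents))

print(history)
```

The test function looks up `total_cents` each time it runs, so redefining the function between runs is enough to show the three phases in one file.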

Running Initial Tests and Embracing Failures

The first step in Test-Driven Development (TDD) is to run the tests and embrace the failures that come with them. This phase sets the foundation for the iterative process that follows. A test that is run for the first time usually fails, which is the expected outcome in TDD. The failure is not a setback but a guidepost indicating the direction of the next coding steps.

The process of running tests and seeing them fail can be summarized in the following steps:

- Write a failing test case that reflects the desired functionality.

- Execute the test suite to validate the current code against the new test.

- Observe the failure and analyze the results to understand the deficiencies in the code.

- Implement the simplest code changes to address the test case.

- Re-run the tests to ensure that the new code satisfies the test conditions.

By adhering to this method, developers build a robust suite of tests that not only validates the required functionality but also ensures that the simplest solutions are employed to meet these requirements. It’s a disciplined approach that fosters both quality and efficiency in software development.
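As a rough sketch of these steps, the following example uses Python's standard `unittest` module to run a suite, inspect the failure, apply the simplest fix, and run the suite again. The `is_leap_year` function and the `LeapYearTest` class are assumptions made up for illustration.

```python
import io
import unittest


# Unfinished code under test: it exists, but does not yet do the job.
def is_leap_year(year):
    return False


class LeapYearTest(unittest.TestCase):
    def test_year_divisible_by_four_is_leap(self):
        self.assertTrue(is_leap_year(2024))


def run_suite():
    """Execute the suite and return the result object for inspection."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(LeapYearTest)
    runner = unittest.TextTestRunner(stream=io.StringIO(), verbosity=0)
    return runner.run(suite)


# Execute the suite and observe the expected failure.
first_run = run_suite()
for failed_test, traceback_text in first_run.failures:
    print("FAILED:", failed_test.id())

# Simplest change that satisfies the current test. It is deliberately
# incomplete: further tests (for example for the year 1900) would drive
# the remaining leap-year rules.
def is_leap_year(year):
    return year % 4 == 0


# Re-run the tests to confirm the new code satisfies them.
second_run = run_suite()
print("all passing:", second_run.wasSuccessful())
```

Reading `first_run.failures` before changing any code keeps the focus on what the test is actually reporting, which is the analysis step in the list above.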

Refactoring for Clean and Efficient Code

Once the tests are passing, the focus shifts to refactoring the code. This is a critical step to ensure that the codebase remains maintainable and scalable. Refactoring involves cleaning up the code without changing its external behavior, improving its internal structure.

During refactoring, developers should aim to:

- Simplify logic and improve code readability.

- Remove any duplication and ensure code follows the DRY (Don’t Repeat Yourself) principle.

- Optimize performance by eliminating unnecessary computations.

- Ensure that the code adheres to established coding standards and best practices.

It’s important to re-run tests after each refactoring change to confirm that no functionality has been inadvertently affected. This iterative process of testing and refactoring gradually enhances the code quality, making it more robust and easier to extend in the future.
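A small before-and-after comparison may make this concrete. The shipping-cost rules and all names below are hypothetical; the point is only that the same behavioral checks pass against both versions.

```python
# Before: duplicated arithmetic and unexplained numbers.
def shipping_cost_before(weight_kg, express):
    if express:
        if weight_kg <= 1:
            return 5 * 2
        else:
            return (5 + (weight_kg - 1) * 2) * 2
    else:
        if weight_kg <= 1:
            return 5
        else:
            return 5 + (weight_kg - 1) * 2


# After: one formula, named constants, no repeated branches (DRY).
BASE_RATE = 5
PER_EXTRA_KG = 2
EXPRESS_MULTIPLIER = 2


def shipping_cost_after(weight_kg, express):
    extra_kg = max(weight_kg - 1, 0)
    cost = BASE_RATE + extra_kg * PER_EXTRA_KG
    return cost * EXPRESS_MULTIPLIER if express else cost


def check_behavior(shipping_cost):
    """The same assertions are run against either implementation."""
    assert shipping_cost(1, False) == 5
    assert shipping_cost(3, False) == 9
    assert shipping_cost(1, True) == 10
    assert shipping_cost(3, True) == 18


# External behavior is unchanged, so both versions pass the same checks.
check_behavior(shipping_cost_before)
check_behavior(shipping_cost_after)
```

Because `check_behavior` takes the function as a parameter, it can be re-run after every small refactoring step without editing the tests themselves.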

Achieving Comprehensive Test Coverage

Building a Suite of Functional Tests

Developing a comprehensive suite of functional tests is a critical step in ensuring that your software behaves as expected under various scenarios. Functional tests focus on the user’s perspective, verifying that the system performs its intended functions correctly. These tests are essential for identifying discrepancies between the actual and expected behavior of the system.

To craft reliable and user-focused functional test cases, it’s important to consider the end-to-end functionality of the application. This involves testing the application’s interfaces, APIs, databases, security, client/server communication, and other integral parts of the software. A well-structured functional test suite can serve as a powerful tool for regression testing, helping to catch bugs early and reduce the risk of defects making it to production.

Here are some key steps to consider when building your functional test suite:

- Define clear and concise test objectives.

- Design test cases that cover all functional requirements.

- Prioritize test cases based on risk and impact.

- Execute tests systematically and record results.

- Regularly review and update test cases to adapt to changes in the application.

Remember, the goal is to create a test suite that not only verifies the functionality but also enhances the overall quality of the software. By following these steps and incorporating feedback from the testing process, you can continuously improve your functional testing approach.
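One possible way to act on the prioritization step is a simple score per test case. The sketch below assumes a risk-times-impact formula on 1 to 5 scales; the formula, the `FunctionalTestCase` class, and the sample test names are all illustrative assumptions, not a prescribed method.

```python
from dataclasses import dataclass


@dataclass
class FunctionalTestCase:
    name: str
    risk: int    # assumed scale: likelihood of failure, 1 (low) to 5 (high)
    impact: int  # assumed scale: cost of a failure, 1 (low) to 5 (high)

    @property
    def priority(self):
        # Assumed scoring rule: higher product means run earlier.
        return self.risk * self.impact


cases = [
    FunctionalTestCase("profile avatar upload", risk=2, impact=1),
    FunctionalTestCase("checkout payment", risk=4, impact=5),
    FunctionalTestCase("search filters", risk=3, impact=2),
    FunctionalTestCase("login", risk=3, impact=5),
]

# Highest-priority cases first.
ordered = sorted(cases, key=lambda case: case.priority, reverse=True)
execution_order = [case.name for case in ordered]
print(execution_order)
```

Teams often weight these factors differently, so the scoring rule is the part most likely to need adjusting.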

Incorporating Various Testing Types for Full Spectrum Coverage

To optimize the software testing process, it’s essential to incorporate a variety of testing types. Comprehensive testing strategies are crucial for ensuring that all aspects of the software are thoroughly vetted. This includes both manual and automated testing methods, which together provide a robust framework for identifying and addressing potential issues.

A full spectrum coverage approach typically involves:

- Unit testing to validate individual components

- Integration testing to ensure modules work together

- Functional testing for verifying business requirements

- Performance testing to assess responsiveness and stability

- Security testing to check for vulnerabilities

- Usability testing to evaluate user experience

By embracing these diverse testing methodologies, teams can create a more resilient, higher-quality software product. An agile approach to this work allows test environments to be managed efficiently and test cases to be executed effectively, which in turn improves software quality.
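To illustrate the difference between the first two levels in the list, here is a minimal sketch with one unit test and one integration test. The `parse_amount` function and the `Ledger` class are hypothetical components invented for this example.

```python
def parse_amount(text):
    """Convert a decimal string such as '12.50' into integer cents."""
    units, _, cents = text.strip().partition(".")
    return int(units) * 100 + int(cents.ljust(2, "0")[:2])


class Ledger:
    """Collects amounts; depends on parse_amount for its input handling."""

    def __init__(self):
        self.entries = []

    def record(self, text):
        self.entries.append(parse_amount(text))

    def balance(self):
        return sum(self.entries)


# Unit test: validates one component in isolation.
def test_parse_amount_unit():
    assert parse_amount("12.50") == 1250
    assert parse_amount("3") == 300


# Integration test: checks that the two components work together.
def test_ledger_integration():
    ledger = Ledger()
    ledger.record("12.50")
    ledger.record("0.75")
    assert ledger.balance() == 1325


test_parse_amount_unit()
test_ledger_integration()
```

If the integration test fails while the unit test passes, the defect is more likely in how the components are combined than in the parsing itself.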

Utilizing Tools for Ensuring Code Coverage

In the quest for quality software, code coverage tools play a crucial role. These tools provide insights into which parts of the code are being exercised by tests, and which areas may need more attention. By highlighting untested code, developers can focus their efforts on improving test coverage, thereby enhancing the reliability and effectiveness of the application.

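Several tools support this work, each with its own features and benefits. Some integrate with continuous integration pipelines and report coverage on every build, while others produce detailed reports that can guide future test development. Note that the tools listed below are test frameworks and automation tools rather than coverage analyzers: they execute the tests, while a dedicated coverage tool (for example, JaCoCo for Java or coverage.py for Python) records which code those runs exercise. Popular choices for running the tests include: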

- Playwright

- JUnit

- Selenium

- Postman

It’s important to select a tool that aligns with your project’s needs and integrates well with your existing testing framework. As you incorporate these tools into your testing strategy, remember that while high code coverage is desirable, it is the quality of the tests, not just the quantity, that ultimately determines the robustness of your software.
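To show what a coverage tool measures, the following hand-rolled sketch uses Python's `sys.settrace` hook to record which lines of a function a set of test inputs executes. It is an illustration only, not a replacement for a dedicated coverage tool; the `classify` function and the hard-coded `BODY_LINES` set (line offsets of its five statements) are assumptions made for the example.

```python
import sys


def classify(n):
    if n < 0:
        return "negative"
    if n == 0:
        return "zero"
    return "positive"


# Assumption for this sketch: offsets of classify's five statements,
# counted from its `def` line.
BODY_LINES = {1, 2, 3, 4, 5}


def executed_lines(func, *args):
    """Return the line offsets inside func that run for the given args."""
    code = func.__code__
    hits = set()

    def tracer(frame, event, arg):
        if frame.f_code is not code:
            return None  # ignore every other function
        if event == "line":
            hits.add(frame.f_lineno - code.co_firstlineno)
        return tracer

    previous = sys.gettrace()
    sys.settrace(tracer)
    try:
        func(*args)
    finally:
        sys.settrace(previous)
    return hits


def coverage(hits):
    return len(hits & BODY_LINES) / len(BODY_LINES)


# A suite with a single input leaves two statements untested.
partial_hits = executed_lines(classify, 5)
missed_by_partial = BODY_LINES - partial_hits

# Adding inputs for the other branches closes the gap.
full_hits = partial_hits | executed_lines(classify, -1) | executed_lines(classify, 0)

print(coverage(partial_hits), sorted(missed_by_partial), coverage(full_hits))
```

The list of missed lines is usually more useful than the percentage, because it points directly at the branches that still need a test.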

Leveraging State Transition Testing

Understanding State Transition in Application Behavior

State transition testing is pivotal in understanding how an application responds to a variety of input conditions. It focuses on the changes in state that occur as a result of these inputs. This technique is particularly useful when the application’s behavior is dependent on a sequence of events or when dealing with a finite set of possible inputs.

When applying state transition testing, it’s essential to consider both positive and negative input values. This dual approach ensures that the application’s behavior is thoroughly evaluated, not just under expected conditions but also when faced with unexpected or erroneous inputs.

Here’s a simplified example of a state transition table for an authentication process:

| Attempt | Correct PIN | Incorrect PIN |
|---|---|---|
| 1st | Access | 2nd attempt |
| 2nd | Access | 3rd attempt |
| 3rd | Access | Account blocked |

In this table, the state of the system transitions from one attempt to the next, with different outcomes based on the correctness of the PIN entered. After the third incorrect attempt, the account is blocked, showcasing a critical state transition.
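The table can be turned into a small state machine and exercised with both correct and incorrect PINs. This is a minimal sketch: the `PinAuthenticator` class, its state names, and the rule that a blocked account ignores further input are assumptions that go beyond what the table specifies.

```python
MAX_ATTEMPTS = 3


class PinAuthenticator:
    """States: 'awaiting_pin', 'access_granted', 'blocked'."""

    def __init__(self, correct_pin):
        self._correct_pin = correct_pin
        self.failed_attempts = 0
        self.state = "awaiting_pin"

    def enter_pin(self, pin):
        # Assumption: once access is granted or the account is blocked,
        # further input does not change the state.
        if self.state != "awaiting_pin":
            return self.state
        if pin == self._correct_pin:
            self.state = "access_granted"
        else:
            self.failed_attempts += 1
            if self.failed_attempts >= MAX_ATTEMPTS:
                self.state = "blocked"
        return self.state


def final_state(pins):
    """Feed a sequence of PIN entries and return the resulting state."""
    auth = PinAuthenticator(correct_pin="1234")
    for pin in pins:
        auth.enter_pin(pin)
    return auth.state


# One test per row of the table, plus the blocked path.
assert final_state(["1234"]) == "access_granted"
assert final_state(["0000", "1234"]) == "access_granted"
assert final_state(["0000", "1111", "1234"]) == "access_granted"
assert final_state(["0000", "1111", "2222"]) == "blocked"
```

Each assertion corresponds to one path through the table, so a missing row in the table shows up as a missing test.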

Applying Positive and Negative Test Values

In the realm of system testing, the application of positive and negative test values is a critical step. Positive test values are those that fall within the expected range that the system should handle correctly. Conversely, negative test values are those that fall outside of the expected range and are used to test the system’s ability to handle error conditions.

For instance, if an input field accepts values from 1 to 10, positive test values might include 2, 5, and 8, while negative test values could be -1, 0, 11, and 15. This approach ensures that both valid and invalid inputs are thoroughly tested, revealing how the system behaves under various conditions.

Here’s an example of how values from different equivalence classes can be selected for testing:

- Valid Equivalence Classes: 1 to 10, 20 to 30

- Invalid Equivalence Classes: -∞ to 0, 11 to 19, 31 to +∞

| Equivalence Class | Test Values |
|---|---|
| -∞ to 0 | -2 |
| 1 to 10 | 3 |
| 11 to 19 | 15 |
| 20 to 30 | 25 |
| 31 to +∞ | 45 |

By systematically applying these guidelines to both input and output conditions, testers can ensure that the system’s response to various data ranges is as expected, thereby enhancing the robustness of the application.
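The equivalence classes above translate directly into a table-driven test with one representative value per class. The `is_accepted` function is a hypothetical stand-in for whatever validation the system under test performs.

```python
def is_accepted(value):
    """Hypothetical validator: accepts 1 to 10 and 20 to 30, inclusive."""
    return 1 <= value <= 10 or 20 <= value <= 30


# One representative value per equivalence class, with the expected verdict.
EXPECTED = {
    -2: False,  # class: up to 0 (invalid)
    3: True,    # class: 1 to 10 (valid)
    15: False,  # class: 11 to 19 (invalid)
    25: True,   # class: 20 to 30 (valid)
    45: False,  # class: 31 and above (invalid)
}

actual = {value: is_accepted(value) for value in EXPECTED}

for value, expected in EXPECTED.items():
    assert actual[value] == expected, f"unexpected result for {value}"
```

Keeping the representatives in a single mapping makes it easy to see at a glance whether every class has at least one test value.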

Guidelines for Effective State Transition Testing

When applying state transition testing, it’s crucial to recognize its suitability for scenarios with a finite set of input values. This technique excels in examining sequences of events within the application, revealing how input variations trigger state changes. For instance, consider an application that transitions between states such as ‘Logged Out’, ‘Logging In’, and ‘Logged In’. Testing should encompass both positive and negative input values to thoroughly assess the system’s behavior.

To ensure effective state transition testing, follow these guidelines:

- Identify and record the different states of the system.

- Use decision tables for functions responding to multiple inputs or events.

- Apply error guessing based on historical data and knowledge of common implementation errors.

Remember, the goal is to determine the system states and understand how the application responds to a variety of input conditions. By meticulously documenting the states and employing a structured approach, testers can provide valuable insights into the application’s robustness and reliability.
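Recording the states and allowed transitions in one table makes both positive and negative tests straightforward. The sketch below uses the three login states mentioned above; the event names and the `InvalidTransition` exception are assumptions chosen for illustration.

```python
# Recorded states and the events allowed in each of them.
TRANSITIONS = {
    ("Logged Out", "submit_credentials"): "Logging In",
    ("Logging In", "credentials_accepted"): "Logged In",
    ("Logging In", "credentials_rejected"): "Logged Out",
    ("Logged In", "log_out"): "Logged Out",
}


class InvalidTransition(Exception):
    pass


def next_state(state, event):
    try:
        return TRANSITIONS[(state, event)]
    except KeyError:
        raise InvalidTransition(
            f"{event!r} is not allowed in state {state!r}"
        ) from None


def run_events(start, events):
    """Apply a sequence of events and return the final state."""
    state = start
    for event in events:
        state = next_state(state, event)
    return state


# Positive test: a valid sequence of events ends in the expected state.
assert run_events(
    "Logged Out", ["submit_credentials", "credentials_accepted"]
) == "Logged In"

# Negative test: an event that is not defined for the state is rejected.
try:
    next_state("Logged Out", "log_out")
    rejected = False
except InvalidTransition:
    rejected = True
assert rejected
```

Any (state, event) pair missing from `TRANSITIONS` is a candidate for a negative test.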

Iterative Development and Test Case Enhancement

The Iterative Nature of Test-Driven Development

Test-Driven Development (TDD) is inherently iterative, a process that aligns perfectly with the agile methodology of software development. At its core, TDD involves a continuous cycle of testing, coding, and refactoring. This cycle ensures that development is both incremental and integrative, allowing for the gradual improvement of the codebase with each iteration.

The mantra of ‘Red-Green-Refactor’ is not just a set of steps; it’s the philosophy that drives TDD. By adhering to this principle, developers write a failing test (Red), implement just enough code to pass the test (Green), and then refine the code without altering its functionality (Refactor). This cycle repeats, gradually building a robust suite of tests that validate the growing features of the application.

The iterative nature of TDD fosters a disciplined approach to coding, where the focus is on small, manageable changes that are continuously tested and integrated. Below is a simplified representation of the TDD cycle:

- Write a failing test (Red)

- Make the test pass (Green)

- Refactor the code (Refactor)

- Repeat the cycle

As the cycle continues, the codebase evolves in a controlled and predictable manner, reducing the likelihood of introducing bugs and ensuring that each new feature is thoroughly tested before it is added.

Adding Complexity Through Additional Test Cases

As the development cycle progresses, the addition of more complex test cases becomes crucial. Iterative development is a technique where each cycle builds upon the previous one, enhancing the robustness of the application. By introducing new test cases, developers can uncover additional edge cases and scenarios that may not have been considered initially.

To illustrate, consider the process of adding a second test case in a TDD environment:

- Create a new test method for the additional scenario.

- Implement the test, ensuring it captures the new complexity.

- Run the test to observe failure (red), then modify the code to pass (green).

- Refine the code through refactoring while maintaining test success.

This approach ensures that each new test case not only increases coverage but also contributes to the overall quality and maintainability of the code. The table below summarizes the iterative steps:

| Step | Action |
|---|---|
| 1 | Add new test case |
| 2 | Implement & run test |
| 3 | Observe failure (red) |
| 4 | Modify code (green) |
| 5 | Refactor & maintain |

By continuously iterating and enhancing test cases, developers can systematically drive out more complex behavior and ensure that the software evolves in a controlled and predictable manner.
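The following sketch walks through these steps for a second test case. The `slugify` function, both tests, and the `run_all` helper are hypothetical and exist only to show how a new test exposes a gap in code that already passes its first test.

```python
def run_all(tests):
    """Run each named test and record 'green' or 'red'."""
    results = {}
    for name, test in tests.items():
        try:
            test()
            results[name] = "green"
        except AssertionError:
            results[name] = "red"
    return results


# Version 1 was written to satisfy only the first test.
def slugify(text):
    return text.lower().replace(" ", "-")


def test_basic():
    assert slugify("Hello World") == "hello-world"


# Step 1: add a test case that captures new complexity.
def test_extra_spaces():
    assert slugify("  Hello   World  ") == "hello-world"


TESTS = {"basic": test_basic, "extra_spaces": test_extra_spaces}

# Steps 2 and 3: run the tests and observe the new one failing.
after_v1 = run_all(TESTS)


# Step 4: modify the code so that both tests pass.
def slugify(text):
    return "-".join(text.lower().split())


# Step 5: re-run the whole suite after the change.
after_v2 = run_all(TESTS)

print(after_v1, after_v2)
```

Running the full suite after the change, not only the new test, confirms that the fix did not break the behavior the first test protects.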

Utilizing Error Guessing to Refine Test Cases

Error guessing is a technique that leverages the tester’s intuition and experience to predict where bugs might occur. By anticipating potential errors, testers can write targeted test cases that are more likely to uncover hidden issues. This method is particularly useful for refining test cases in areas that automated testing may overlook.

To effectively apply error guessing, testers should consider the following points:

- Draw on past experiences with similar applications.

- Have a deep understanding of the system under test.

- Be aware of common implementation mistakes.

- Recall areas that have caused issues in the past.

- Review historical data and previous test results.

While error guessing is not a systematic approach, it can be a powerful tool when combined with other testing techniques. It is often the tester’s insight that uncovers the most elusive defects.
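In practice, error guessing often takes the form of a list of suspicious inputs drawn from experience. The sketch below applies such a list to a hypothetical `parse_age` function; the function, its 0 to 130 range, and the guessed inputs are all assumptions made for illustration.

```python
def parse_age(text):
    """Return the age as an int, or raise ValueError for anything else."""
    if not isinstance(text, str):
        raise ValueError("age must be given as text")
    stripped = text.strip()
    if not stripped.isdigit():
        raise ValueError(f"not a whole number: {text!r}")
    age = int(stripped)
    if age > 130:
        raise ValueError(f"age out of range: {age}")
    return age


# Inputs a tester might guess are likely to be mishandled.
GUESSED_INVALID = ["", "   ", "abc", "-1", "4.5", "999", None]

# Unusual but legitimate inputs that should still be accepted.
GUESSED_VALID = {"0": 0, "007": 7, " 42 ": 42, "130": 130}


def rejects(value):
    """True if parse_age refuses the value with a ValueError."""
    try:
        parse_age(value)
    except ValueError:
        return True
    return False


for value in GUESSED_INVALID:
    assert rejects(value), f"expected rejection of {value!r}"

for text, expected in GUESSED_VALID.items():
    assert parse_age(text) == expected
```

Each guessed input that reveals a defect is worth keeping in the suite as a permanent regression test.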

Assessing and Improving Your Testing Strategy

Evaluating the Completeness of Your Test Suite

Assessing the completeness of your test suite is crucial to ensure that all aspects of the application are thoroughly tested. A comprehensive test suite should cover a variety of test types and scenarios. This includes unit tests, integration tests, functional tests, and performance tests, each serving a unique role in the overall testing strategy.

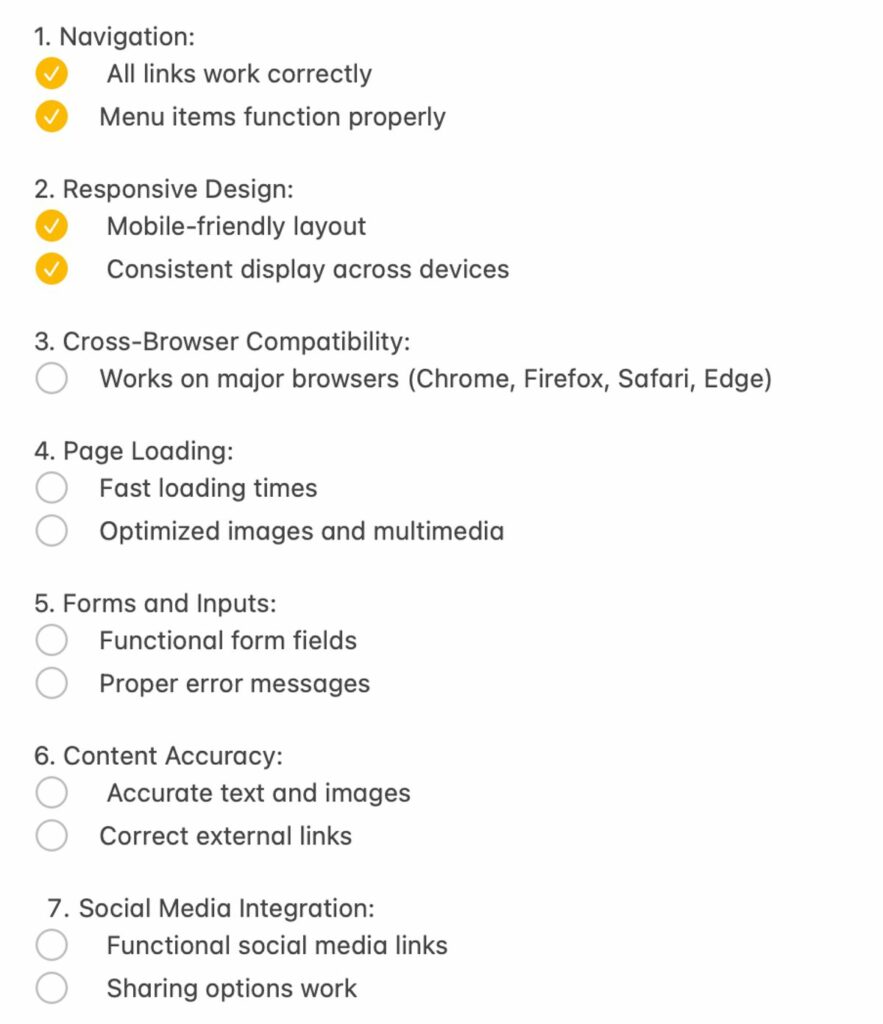

To evaluate your test suite, consider the following checklist:

- Have all critical functionalities been covered by tests?

- Are there tests for both expected and unexpected user behaviors?

- Is there a balance between automated and manual testing efforts?

- Have performance benchmarks been established and tested against?

- Are tests regularly reviewed and updated to reflect changes in the application?

Regularly revisiting and refining your test suite is essential for maintaining its effectiveness. Utilizing tools for code coverage can help identify untested parts of your codebase, guiding you towards areas that may require additional attention. By continuously improving your test suite, you can foster a robust and reliable testing process.

Custom Tips for Enhancing Testing Approaches

To elevate your testing strategy, it’s essential to tailor your approach to the specific needs of your project. Here are some custom tips to enhance your testing methodologies:

- Prioritize test cases based on risk and impact to ensure critical functionalities are thoroughly tested.

- Leverage historical data and past test results to anticipate areas of potential failure.

- Incorporate user feedback into your test cases to align them more closely with real-world usage.

- Optimize test environments for agility, allowing for efficient management and execution of test cases.

By implementing these targeted strategies, you can significantly improve the quality and effectiveness of your software applications.

Continuous Improvement in Testing Methodologies

The pursuit of excellence in testing is an ongoing journey, one that requires a commitment to continuous improvement. As technology evolves and customer expectations rise, integrating continuous testing and test data management becomes crucial for businesses to stay competitive. This integration ensures that feedback is received in real-time and defects are corrected promptly, aligning with the iterative nature of software development.

A holistic approach to testing is not just about covering various types of tests; it’s about ensuring quality assurance throughout the development lifecycle. From unit to performance testing, the goal is to maintain a wide coverage that guarantees the delivery of high-quality software. To achieve this, a blend of manual and automated testing strategies is essential, utilizing the best of test automation frameworks and tools.

Assessing and improving your testing strategy is a proactive measure to enhance software quality. A structured assessment of the strategy can reveal gaps in coverage and point to concrete improvements. Remember, the goal is not just to test, but to test smarter and more effectively.

Conclusion

Navigating system testing with an example-driven approach offers a structured and effective method for ensuring software quality. By iteratively running tests, observing failures, implementing fixes, and refining the code, developers can build a robust suite of tests that covers a wide range of scenarios. This article has highlighted the significance of testing techniques such as state transition testing and error guessing, and the importance of a holistic testing strategy that includes unit, integration, functional, and performance testing. Code coverage tools and the guidelines described above can streamline the testing process and lead to more reliable and maintainable software. Ultimately, the goal is to create systems that not only meet the specified requirements but also deliver a seamless, dependable user experience.

Frequently Asked Questions

What is the Red-Green-Refactor principle in TDD?

The Red-Green-Refactor principle is a cycle in Test-Driven Development (TDD) where a test is first written and run, and is expected to fail (Red); then just enough code is written to make the test pass (Green); and finally, the code is refactored to improve its structure while keeping the test passing (Refactor).

How do I achieve comprehensive test coverage?

Comprehensive test coverage can be achieved by building a suite of functional tests and incorporating various types of testing, such as unit, integration, and performance testing. Utilizing tools to ensure code coverage can help identify untested parts of the codebase.

What is State Transition Testing and how is it applied?

State Transition Testing is a technique used to test the behavior of an application by providing different input conditions in a sequence, assessing both positive and negative test values. It is useful for testing applications with a limited set of input values and for evaluating sequences of events.

What is the role of iterative development in enhancing test cases?

Iterative development allows for the gradual building of a comprehensive test suite. As functionality is added or modified, new test cases are created and existing ones are refined, often using error guessing to anticipate and test for potential issues.

How can I assess the completeness of my testing strategy?

To assess the completeness of your testing strategy, evaluate your test suite to ensure it covers all functionalities and edge cases. Consider taking assessments or using tools that provide insights into your testing coverage and effectiveness.

What are some guidelines for effective error guessing in test case enhancement?

Effective error guessing should be based on previous experience with similar applications, understanding of the system under test, knowledge of typical implementation errors, recollection of previously problematic areas, and evaluation of historical data and test results.